Europe’s strategy to remain ahead of the competition

Future products, business models, industrial processes and companies are being built on the digital transformation. It is often said that information and knowledge are power, and that “to out-compete is to out-compute”.

This is even more true with Exascale investments.

The ability to collect, analyze and use data at a scale that is nearly inconceivable is driving a new generation of computers. With millions of heterogeneous cores, these computers will deliver extreme performance and make complex achievements possible.

High Performance Simulation opens new opportunities not only for research but also for a large spectrum of industrial sectors and services such as Energy, Aeronautics, Finance or Health. The potential impact of exascale is enormous, not only from a scientific and technical point of view but also in economic, societal and environmental terms.

Exascale is therefore a game-changing technology that pushes the frontiers of science and commerce in virtually every discipline and sector.

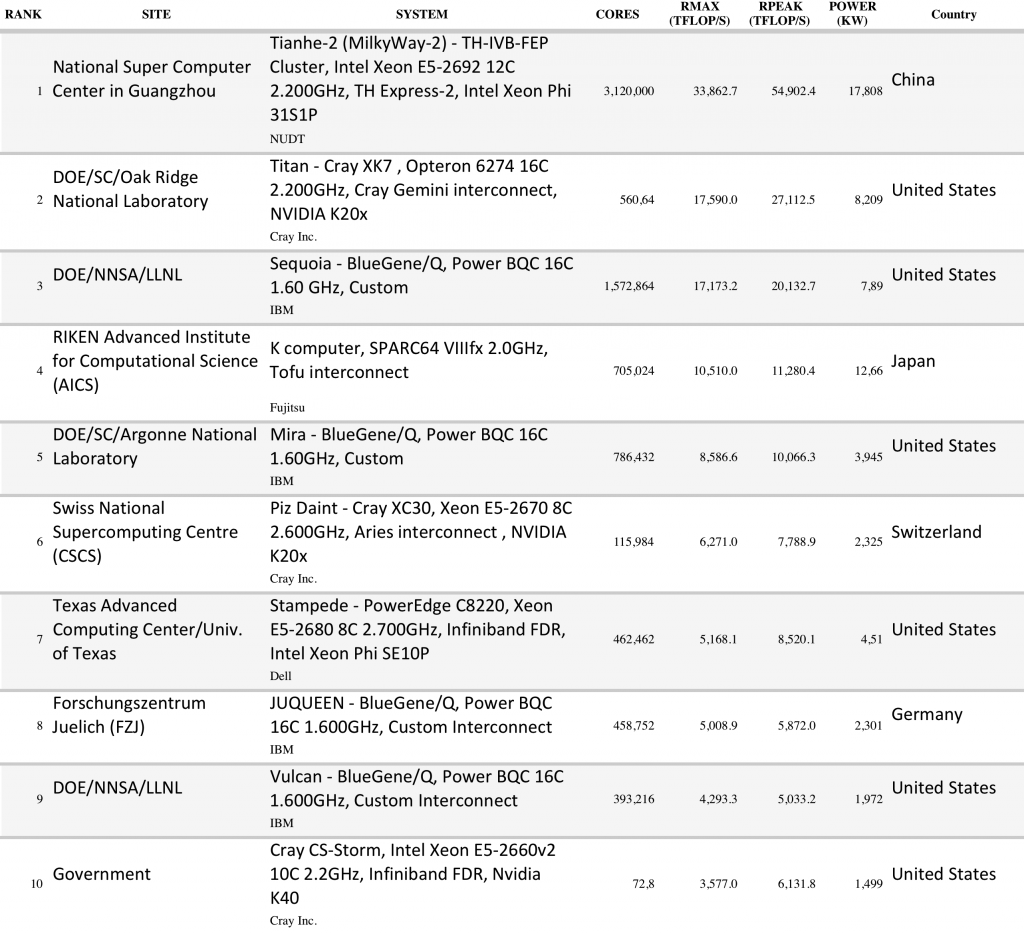

Most of the world’s current supercomputers are based in China and the United States.

The highest-ranked European supercomputer is at Juelich, a historic EESI partner. This powerful infrastructure ranks #8 in the Top500 list.

Europe’s ability to sustain its strategic, industrial and scientific competitiveness is at stake.

Source: TOP500.org

Application challenges

Supercomputers are used for highly calculation-intensive tasks. Typical applications involve solving problems in quantum physics, weather forecasting, climate research, molecular modeling and physical simulation.

Studying biological macromolecules, polymers and crystals requires computing the structures and properties of chemical compounds. Designing greener airplanes requires wind-tunnel simulations.

Advancing nuclear fusion research or understanding the detonation of nuclear weapons also calls for simulation.

A particular class of problems, known as Grand Challenge problems, consists of problems whose full solution requires semi-infinite computing resources.

The benefits of Exascale investments for Europe are numerous. They have been described in the EESI findings and are confirmed in the EESI2 studies. They tackle grand societal challenges, improve national economies and global competitiveness, and advance humankind.

Economy

The 21st century has entered the digital revolution. Billions of people, educated and equipped to satisfy most of their needs, are now connected.

The multitude of information, problems and interconnections is transforming the established social and economic order.

Leading companies no longer drive their industry sectors alone. Technology companies, administrations, service companies, associations, people and even objects can cooperate in the blink of an eye to capture and deliver value.

Most industries require new applications: prototyping aerodynamic automobiles or airplanes; inventing pharmaceutical drugs, microprocessors and computers; monitoring implantable medical devices; and designing golf clubs and household appliances.

Beyond products, industrial and business processes also have to change, e.g. finding and extracting oil and gas, manufacturing consumer products, modelling complex financial scenarios and investment instruments, planning store inventories for large retail chains, creating animated films, and forecasting the weather.

Given the exponential growth of this multitude, it is clear that the capacity to process data at a new scale, in the range of exascale, is a key success factor for Europe’s economy.

Innovation

Innovation is a key objective driving Europe’s strategy to strengthen the worldwide position of its industry and academia.

97% of HPC users consider it indispensable for their ability to innovate, compete, and survive.

Political and organizational leaders are increasingly recognizing HPC’s crucial value for driving innovation and competitiveness.

Commenting on the EU Innovation Union, launched in October 2010, Robert-Jan Smits, Director General for Research and Innovation of the European Commission, noted that:

“…research and innovation are key strands of the Europe 2020 strategy. Stark figures confront this ambition to use knowledge as a driver for sustainable growth. Albeit with large internal variations, Europe consistently spends less than 2 per cent of GDP on research and development, only two-thirds of that in the US and a little more than half the Japanese figure. Meanwhile, China’s investment is growing year by year and will be on a par with Europe in a few years. The EU Innovation Union Scoreboard tells a similar story: a big innovation gap with Japan and the US, with China (not to mention India and Brazil) quickly catching up.”

Other examples:

- In his 2006 State of the Union address, U.S. President George W. Bush promised to trim the federal budget, yet urged more money for supercomputing. President Obama also mentioned supercomputing prominently in his 2011 State of the Union address.

- In 2009, Russian President Dmitry Medvedev warned that without more investment in supercomputer technology, Russian products “will not be competitive or of interest to potential buyers.”

- In June 2010, Rep. Chung Doo-un of South Korea’s Grand National Party echoed that warning: “If Korea is to survive in this increasingly competitive world, it must not neglect nurturing the supercomputer industry, which has emerged as a new growth driver in advanced countries.”

Industry is in the midst of a new, 21st century industrial revolution driven by the application of computer technology to industrial and business problems. HPC already plays a key role in designing and improving many industrial products — including automobiles, airplanes, pharmaceutical drugs, microprocessors, computers, implantable medical devices, golf clubs, and household appliances — as well as industrial-business processes (e.g., finding and extracting oil and gas, manufacturing consumer products, modeling complex financial scenarios and investment instruments, planning store inventories for large retail chains, creating animated films, and forecasting the weather).

HPC users typically pursue these activities with virtual prototyping and large-scale data modeling, that is, using computers to create digital models of products or processes and then evaluating and improving the design of the products or processes by manipulating these computer models. Given their broad and expanding range of high-value economic activities, HPC users are increasingly crucial for industrial and business innovation, productivity, and competitiveness.

With EESI, ETP4HPC and PRACE, to name a few, the European Commission recognizes the crucial role of HPC in innovation.

The Council asked for further development of the European High Performance Computing Infrastructure. It recommends pooling national investments and efforts in HPC to succeed in the use, development and manufacturing of advanced computing products, services and technologies.

Environment

Climate change, pollution and other environmental issues are becoming urgent given their societal and economic impact.

The environment is studied through a wide range of disciplines commonly referred to as Earth Sciences. They range from the study of the atmosphere, the oceans and the biosphere to issues related to the solid part of the planet.

Earth Sciences address many important societal issues, from weather prediction, air quality, ocean prediction and climate change to natural hazards such as seismic and volcanic hazards. For example, climate characteristics determine which agricultural crops can grow in a given place, where people can live, and how cities and industries can develop. Forecasting climate change is crucial to determining its impact and to helping plan industrial or societal activities.

Among all these issues, WCES concentrates on two main domains: on the one hand, weather and climate, which share some similarities, and on the other hand, solid earth.

Climate evolution is driven by both anthropogenic forcing (greenhouse warming) and by natural fluctuations.

Most of the work done up to now has been devoted to improving the description of greenhouse warming and to evaluating its impacts. Natural climate variability, on the other hand, is driven by, among other factors, oceanic fluctuations in the Atlantic and/or the Pacific, with characteristic time-scales of a few years to a few decades.

Besides improving the description of greenhouse-induced climate change and its course over the next decades and centuries, what we call climate predictions, it will be more and more important to forecast what detailed climate will occur 5, 10 or 30 years in the future, because such climate characteristics are crucial for planning most industrial and societal activities.

Weather prediction, air quality, ocean prediction, climate change and natural hazards such as seismic and volcanic hazards are all becoming areas where high performance computing is a necessity.

The above representation is the result of a simulation of cloud and convection processes at a spatial resolution of 25 km. As a step towards the longer-term goal of 1-km resolution, this simulation was performed during the UPSCALE project using the Met Office Global Atmosphere Unified Model on the PRACE Tier-0 machine Hermit.
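To give a feel for the gap between the 25 km simulation and the 1-km goal, the short Python sketch below applies a common rule of thumb: halving the horizontal grid spacing quadruples the number of grid columns and, for explicit time stepping, roughly halves the admissible time step. This is an illustrative assumption, not the cost model of the UPSCALE project or of the Unified Model, and the function name `relative_cost` is hypothetical.

```python
def relative_cost(coarse_km: float, fine_km: float, refine_vertical: bool = False) -> float:
    """Estimate how much more compute a finer grid needs (rule of thumb only).

    Assumptions (not taken from the source text):
    - the number of horizontal grid columns grows with the square of the
      refinement ratio;
    - the number of time steps grows linearly with the ratio (CFL-type limit);
    - the vertical grid is unchanged unless refine_vertical is True.
    """
    ratio = coarse_km / fine_km
    horizontal = ratio ** 2          # more grid columns in x and y
    time_steps = ratio               # shorter time step for stability
    vertical = ratio if refine_vertical else 1.0
    return horizontal * time_steps * vertical


if __name__ == "__main__":
    factor = relative_cost(25.0, 1.0)
    print(f"25 km -> 1 km needs roughly {factor:,.0f}x more computation")
```

Under these assumptions, going from 25 km to 1 km multiplies the computational work by about 15,625, which is why such resolutions are tied to exascale-class machines. Real models, with their own numerical schemes and I/O costs, may deviate from this estimate.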

Health

In recent years, human health has improved tremendously through knowledge acquired by simulation. Computational biology and bioinformatics help us understand the mechanisms of living systems.

In the pharmaceutical industry, people can benefit from more effective drugs. As an example, the FDA approved 39 new drugs in 2012, significantly more than the average over the last 20 years. There are recent success cases of computer-aided drug design at Pfizer, Eli Lilly, GlaxoSmithKline and Sanofi.

In genomics, 80% of the components of the human genome have now been found to have at least one biochemical function associated with them. Such progress, together with molecular simulation or organ simulation, will lead to advances for humankind, such as customised drugs.

In 2012, Europe funded the Human Brain Project, a major $1 billion investment to develop a working model of the entire brain. For its part, the USA announced in April 2013 a competing project ($100 million in 2014) called the Brain project. The effort will require the development of new tools not yet available to neuroscientists and, eventually, perhaps lead to progress in treating diseases like Alzheimer’s and epilepsy and traumatic brain injury. It will involve both government agencies and private institutions.

With the recent advances in this area (e.g. the next generation of DNA sequencing instruments), the generated data is becoming larger and more complex. Exascale will facilitate more progress.

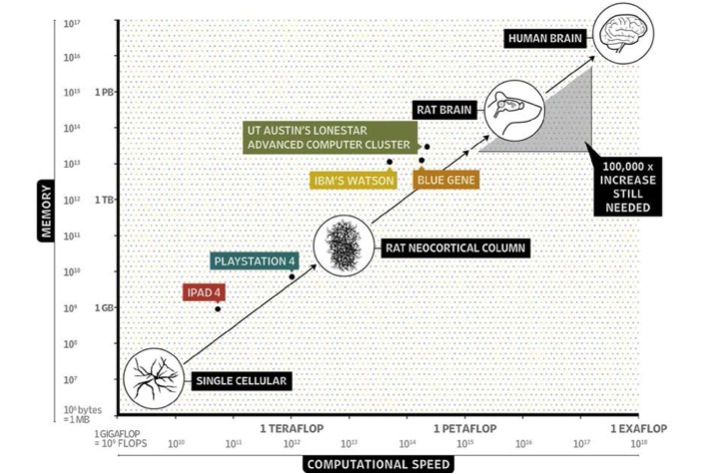

The above graphic shows the drastic change in order of magnitude between the simulation of a simple cell and that of an entire organ such as the human brain.

Education and cooperation

The advent of ExaScale computing marks a fundamental paradigm shift in science and technology. Achieving efficient Exascale computing is a matter that goes far beyond the research community. Capitalizing on ExaScale computing requires a rich, vibrant, and creative eco-system out of which the future innovative and potentially disruptive use of ExaScale can be developed.

The universality of high end computing within all branches of computational science and engineering poses a new challenge to education.

Currently, the training of scientists and engineers has deficits in teaching the knowledge that is necessary to exploit the opportunities created by ExaScale computing.

In the near future, the importance of computational methods will increase dramatically. ExaScale systems will make it possible to devise computational models for phenomena and processes of unprecedented complexity. Simulation-based techniques will be the key to analysing and answering research problems in all fields of science, and they will be the primary tool for prediction and control, thus helping to design better products and enable sustainable development.

Only scientists and engineers who are trained to use these methods effectively will be able to exploit this potential.

The situation in ExaScale computing is characterized simultaneously by a constellation of unequalled opportunities and a high risk of failure. With a vigorous technology race ongoing, Europe is in danger of falling behind.

A careful in-depth analysis reveals that the essential bottlenecks lie not only in securing sufficient funding for installing and operating ExaScale systems. Europe is still in a leading position in training and educating scientists and engineers. However, only hesitant first steps have so far been taken towards adopting HPC as a key technology, and university education in Europe is currently not well positioned to address the Exascale challenge.

The traditional disciplinary boundaries are an obstacle, since using ExaScale computing requires a deeper synergistic collaboration across several disciplines in engineering, science, mathematics, and computer technology.

There is a need to define a new kind of scientist trained in more than one of the classical disciplines.

There is a need to develop the educational profile of “computational scientists” or “computational engineers”.

For this, core competences from several contributing fields must be combined with a sufficiently deep specialization, so that these new scientists can work at the leading edge of research.

Science challenges

Massively parallel systems will occupy the HPC community for the next 20 years with the design of new generations of applications and simulation platforms.

The challenge is particularly severe for multi-physics, multi-scale simulation platforms.

Combining massively parallel software components that are developed independently of each other is a main concern. Another roadblock relates to legacy codes, as codes must constantly evolve to remain at the forefront of their disciplines.

Petascale and exascale computers will require new numerical methods, code architectures, mesh generation tools and visualization tools to meet these challenges. Beyond applications, all software layers between the applications and the hardware need to be revisited.

The 4 recognised scientific and technical challenges

Scalability: no runtime environment currently allows an application to execute on 1 million nodes. There is no known solution for launching 1 million processes on large-scale machines in less than 5 minutes.
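The arithmetic behind the launch problem can be sketched in a few lines of Python. The sketch compares a purely sequential launcher with an idealized hierarchical one in which every running process starts further processes in each round. Both function names and the fan-out values are hypothetical, and the model ignores file system contention, network congestion and failures, which are exactly what make the problem hard in practice; it is not a claim that the problem is solved.

```python
def sequential_budget_us(n_processes: int, deadline_s: float) -> float:
    """Time available per process, in microseconds, if one launcher
    starts every process one after another within the deadline."""
    return deadline_s / n_processes * 1e6


def fanout_rounds(n_processes: int, fanout: int) -> int:
    """Rounds needed in an idealized hierarchical launch.

    Starts with one running launcher; in each round every running process
    starts `fanout` new ones. Counts rounds until at least n_processes
    processes are running (launcher included).
    """
    running = 1
    rounds = 0
    while running < n_processes:
        running += running * fanout
        rounds += 1
    return rounds


if __name__ == "__main__":
    n = 1_000_000
    print(f"sequential budget: {sequential_budget_us(n, 300):.0f} microseconds per process")
    for fanout in (1, 31):  # hypothetical fan-out values
        print(f"fan-out {fanout}: {fanout_rounds(n, fanout)} rounds")
```

A sequential launcher would have well under a millisecond per process, whereas a hierarchical scheme needs only a logarithmic number of rounds. The open question is whether each round can stay fast and reliable at that scale.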

Fault tolerance: a must for running applications on 1 million nodes for hours.

Two significant issues:

- the Mean Time Between Failures (MTBF) of very large computers is rapidly diminishing and will soon equal the time required for fault tolerance systems to simply restart applications

- the exponential increase in the number of transistors, and their exponential reduction in size over time, will significantly increase the number of “masked errors” that may go undetected by any system (silent soft errors).
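The first issue can be made concrete with a small Python sketch. It assumes independent node failures at a constant rate, so that the system-level mean time between failures shrinks in proportion to the node count, and it uses Young's classical first-order approximation for the checkpoint interval. The node MTBF of 10 years and the 5-minute checkpoint cost are hypothetical values chosen for illustration, not figures from the EESI studies.

```python
import math

HOURS_PER_YEAR = 24 * 365


def system_mtbf_hours(node_mtbf_years: float, n_nodes: int) -> float:
    """System MTBF assuming independent nodes with constant failure rates:
    failure rates add up, so the MTBF is divided by the node count."""
    return node_mtbf_years * HOURS_PER_YEAR / n_nodes


def young_interval_hours(checkpoint_cost_hours: float, mtbf_hours: float) -> float:
    """Young's approximation of the checkpoint interval, sqrt(2 * C * M).

    Only meaningful while the checkpoint cost C is much smaller than the MTBF M.
    """
    return math.sqrt(2.0 * checkpoint_cost_hours * mtbf_hours)


if __name__ == "__main__":
    node_mtbf_years = 10.0           # hypothetical per-node reliability
    checkpoint_cost_hours = 5 / 60   # hypothetical: 5 minutes per checkpoint
    for n_nodes in (1_000, 100_000, 1_000_000):
        mtbf = system_mtbf_hours(node_mtbf_years, n_nodes)
        interval = young_interval_hours(checkpoint_cost_hours, mtbf)
        print(f"{n_nodes:>9} nodes: system MTBF {mtbf * 60:8.1f} min, "
              f"suggested checkpoint interval {interval * 60:6.1f} min")
```

With these assumed values, a machine of 1 million nodes fails on average about every 5 minutes, which is comparable to the checkpoint cost itself. At that point the approximation breaks down and classical checkpoint/restart leaves almost no time for useful work.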

Programming approach: the programming environment should deal with hierarchy, heterogeneity, flexibility and help the developer make the program scalable.

Other challenges: overhead, computational precision, energy saving, etc.

Enabling Technologies

Since the first comprehensive study on the HPC challenges issued by the US Defense Advanced Research Projects Agency (DARPA), 4 major challenges to achieving Exascale have been reported:

- The Energy and Power Challenge: no combination of currently available technologies will be able to deliver Exascale systems at connected power levels well below 100 MW.

- The Memory and Storage Challenge: state-of-the-art technologies do not yet have the necessary maturity to fulfil the I/O bandwidth requirements within an acceptable power envelope.

- The Concurrency and Locality Challenge: future applications may have to support more than a billion separate threads in order to use the hardware efficiently.

- The Resilience Challenge: systems become increasingly sensitive to operating environments given the component counts for supercomputers and operating points at low voltage and high temperature.
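The Energy and Power Challenge listed above can be restated as a required energy efficiency. The Python sketch below computes how many floating-point operations per second each watt must deliver for a machine sustaining 10^18 operations per second within a given power budget. Only the 100 MW figure comes from the text; the 50 MW and 20 MW budgets and the function name are illustrative assumptions.

```python
EXAFLOP_PER_SECOND = 1e18  # one exaflop/s, the defining rate of an exascale system


def required_gflops_per_watt(power_mw: float, flops: float = EXAFLOP_PER_SECOND) -> float:
    """Energy efficiency (GFLOPS per watt) needed to sustain `flops`
    operations per second within a power budget given in megawatts."""
    watts = power_mw * 1e6
    return flops / watts / 1e9


if __name__ == "__main__":
    for power_mw in (100, 50, 20):  # 50 and 20 MW are hypothetical budgets
        efficiency = required_gflops_per_watt(power_mw)
        print(f"{power_mw:>4} MW budget -> {efficiency:5.1f} GFLOPS per watt required")
```

At 100 MW the machine must deliver 10 GFLOPS per watt, and every halving of the power budget doubles the efficiency that the hardware has to reach.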

The EESI2 experts have identified several technologies that address the main roadblocks to building the proper software infrastructure for exascale systems.

Cross-cutting issues recommendations

Scope: while EESI experts were investigating Applications (WP3) and Enabling Technologies (WP4), they identified issues that are transversal to the different activities.

Given the importance of those topics, the structure of the project has been adapted to integrate joint efforts into a specific work package.

Five cross-cutting issues will have an impact on the Exascale roadmap:

- Data management and exploration

- Uncertainties (UQ/V&V)

- Power & Performance

- Resilience

- Disruptive technologies

Challenges:

- transformational algorithms to address extreme concurrency, asynchronous parallel data movement and access patterns

- advanced data analytics algorithms

This area is still being investigated in terms of state of the art and gap analysis.

Findings are expected to be produced by the end of the EESI2 project in May 2015.